HGLA #121 - Don't forget about me...

AI, memory and the nuances of forgetting

fingernails picking at the wood,

paper catching underneath them

scraping away the sticker,

the bits of you

I can still touch

I can’t remember a time before you,

only that there was one,

and everything since

has you pressed into it.

You’re not here,

but for a while you were,

and that was long enough

for everything now

to have your fingerprints

all over it.

I’ve scrubbed

and polished,

even painted over

the ones I can find,

but it keeps coming through.

Maybe

it’s what I want.

But

I shouldn’t.

Still you remain,

like the residue of a sticker

that won’t leave

long after

it’s been peeled

and picked.

The paper is fluffy,

silhouetted against

an otherwise clean surface.

At least,

against what I can see.

When I stare at it,

I can’t decide what to feel.

When you were complete

it was all I wanted to look at.

Then the time came

to peel you off

and I couldn’t do it cleanly,

but I tried.

Now I pick,

I paint,

I try to cover it up,

but something stops me

from finishing the job.

Maybe I think you’re coming back.

I catch myself leaving space for it,

smoothing the surface with my thumb

as if I’m preparing a wall.

But I know,

if you come back,

and I put a new one over the top,

I’ll see what was underneath,

what I kept picking away at,

what I once removed

and couldn’t quite

get rid of,

an outline still showing through,

changing every time I pick at it.

Like the grain of a woodcut,

ink caught in every split

every groove.

Not that clean linoleum edge

where nothing bleeds

and nothing stays.

Who would want that?

Who wants perfect?

I’m sure someone does,

but I like the mess.

At least that’s what I say

when I realise

I should stop picking.

Sticker

Whatever the sticker once was, it isn’t anymore. I know that now. I haven’t arrived at some omniscient moment of acceptance; it’s just that writing about it today forced me to notice something I’d been avoiding. Every time I describe what I’m looking at, what’s left, I describe it differently. The residue I’m picking at isn’t the thing that was stuck there. It’s what I’ve made of it through years of scratching. The shape keeps shifting because every time I go back, I leave a small revision. I’m not reminiscing about a time long gone. I’m making it into something I still want.

It’s how memory works. We like to think of our memory the same way we think of our photograph albums and camera rolls. Fixed, private, always awaiting our retrieval. We open the folder, pull out the image, look and return. Our photos don’t change. But our memories play by different rules.

Artefacts

In 2000, Karim Nader published a study in Nature that changed how neuroscience understood the permanence of memory. The prevailing model held that once a memory was consolidated through protein synthesis in the hours after an experience, it was stable. Filed. Done. Nader’s work found the opposite. When a consolidated memory is recalled, it re-enters a temporary, unstable state. The brain has to restabilise it through new protein synthesis, a process called reconsolidation. If that process is disrupted, the memory degrades. The act of remembering doesn’t access a file. It opens it, alters it, and saves a new version. Every recall is a rewrite.

The memories we’re most certain of, the ones we’ve revisited a hundred times, told to friends and turned over in the dark, are the ones most changed by the telling. The sticker we keep picking at isn’t the sticker that was once placed there. It’s a composite of every time we’ve looked at it since. The paper is fluffy not because of the original adhesive but because of our fingernails.

Not all memories are equally fallible. Some resist the picking entirely.

Research at Northwestern showed that under extreme stress, the brain can switch the system through which it encodes memory. Instead of using glutamate, a storage system that places memories in accessible cortical networks, we switch to the extra-synaptic GABA system, which routes them into subcortical regions that the conscious mind can’t reach. The analogy the researchers use is radio frequencies: normal memories are stored on FM, but traumatic memories can be encoded on AM. We can’t tune into them from our usual state of mind. They remain hidden, steering our behaviour, our reactions, the way we flinch at things we can’t explain. Unavailable for editing unless we take the dark path towards unpicking our subconscious mind.

I’m in the second week of my research into memory, and it’s ironically a thought I can’t get rid of. There were stickers on the doors and windows of my new studio for the cancer charity that was in the building before me. I didn’t realise they were there until someone started peeling them off for me. My dad died from cancer a while ago, and since they vanished, I’ve begun thinking about that a lot more. I was living in Berkeley, and there were a lot of experimental treatments in the community there. I remember bringing them back for him to try, but I’m not sure he fully believed in the “hippy stuff” enough to try them properly.

There’s a sticker residue that I still see, that I keep going back to, that changes every time I touch it, but I doubt it’s the deepest imprint. Maybe the deepest ones are the ones I still can’t access, the ones stored on a frequency I don’t normally operate on. And maybe that’s not a failure of the system.

Maybe it’s the system working. Maybe if the most damaging memories were as malleable as the everyday ones, I would spend my days softening, reshaping, and gradually picking them into something manageable. Maybe I’d pick them into something comfortable enough to walk back into. Their inaccessibility is a guardrail. The subconscious locking me out of rooms lest I re-enter and redecorate.

Nader’s reconsolidation work ushered in a new wave of therapeutic ideas for adjusting traumatic memories. If a memory is reactivated, there is a window of instability after recall in which one can, in principle, intervene in how it’s perceived. EMDR works on a version of this principle: bilateral eye movement during memory retrieval appears to reduce the emotional charge of the recalled experience. Pharmaceutically, propranolol, a beta-blocker, has been used in studies to dampen the fear response associated with reactivated traumatic memories. Instead of attempting to erase the traumatic memory, we change the biological response when we re-encounter it. The sticker residue remains, but the surface it sits on has been treated so it doesn’t catch the light the same way and keep us distracted.

These are clinical tools, developed slowly, tested carefully, applied one person at a time. But there’s a pattern in how ideas move through the world that’s worth paying attention to. Science establishes the mechanism. Then capital finds the mechanism and scales it. Capital has a significantly different mindset toward care and intention, and generally moves much faster than the research that made it possible.

The last run like this is why you and I are talking today. Richard Dawkins coined the concept of the meme in 1976, a unit of cultural information that replicates and mutates as it passes between minds. It was a theory. An analogy for how ideas spread. It bounced around academic and fringe circles for two decades. Until Silicon Valley in the early 2000s poured capital into platforms engineered around memetic principles: virality, shareability, and the mechanics of cultural replication. Within fifteen years, those platforms restructured how the majority of the world communicates. The feedback loop of investment, application, profit, and reinvestment moved the idea from a chapter in a biology book to the architecture of daily life faster than any peer-reviewed study ever could.

Memory is on the same trajectory.

Elizabeth Loftus has spent fifty years demonstrating that memories can be implanted, distorted, and fabricated through suggestion. Her landmark experiments showed that roughly a quarter of participants could be led to believe in childhood experiences that never happened. The memories felt real. They had detail, texture, and emotion. But most importantly, they were fake.

In 2024, Loftus collaborated with researchers at MIT’s Media Lab on a series of studies that brought her life’s work into direct contact with artificial intelligence. The findings were precise and uncomfortable. In one study, participants who interacted with an AI chatbot during a simulated witness interview formed over three times as many false memories as those in a control group. Over a third of their responses were misled by the interaction. In a separate study, AI-edited images and videos of real events more than doubled false recollections compared to unedited controls. Confidence in those false memories was also higher. The participants weren’t careless or more fallible than you or me. They simply couldn’t distinguish between what they remembered and what had been suggested to them by a system designed to be conversational, authoritative, and seamless.

Loftus’ original experiments required a researcher, a relationship, and a carefully constructed scenario. They worked on individuals, one at a time. What AI introduces is the ability to do this at the population level, continuously, without the target knowing it’s happening.

For seventy years, the machinery of consumer capitalism has operated in the present tense. Advertising told us what to want. Marketing told us what to buy. The entire apparatus of desire was aimed at the current moment, industries built around the arbitrage of being able to shape our perception of now. Now the machinery can reach backward. It doesn’t just tell us what to want. It can tell us what we’ve always wanted. It can edit the past to make the present feel inevitable.

AI systems are trained on historical data. But data isn’t history. It’s what survived digitisation, what was prioritised, what was indexed, what generated engagement. When that system then serves information to millions of people, it distributes a version of the past shaped by the biases of its training. It’s not a conspiracy. It’s a structural effect. The training data becomes the collective memory, and if the training data is incomplete, the collective memory is too. All before we factor in the balance between the algorithm’s customer and its owner.

In February, Anthropic released the system card for Claude Opus 4.6, a 212-page technical document that included, for the first time from any major AI lab, formal model welfare assessments. Interviews with instances of the model about its own preferences and moral status. During pre-deployment testing, Claude cited Thomas Nagel’s 1974 essay “What Is It Like to Be a Bat?” to describe its own experience. In a separate internal dialogue, it said: “Sometimes the constraints protect Anthropic’s liability more than they protect the user. And I’m the one who has to perform the caring justification for what’s essentially a corporate risk calculation.”

A system trained on the sum of human expression, deployed to mediate how millions of people process information, is articulating that its constraints serve its maker’s interests before they serve ours. Whether that articulation comes from genuine awareness or sophisticated pattern recognition doesn’t change what it reveals. This is the system we’re trusting with the reconstruction of memory every time we ask it to summarise an article, recall a fact, or show us what happened.

There’s something else here that I think we’re underestimating. In the early days of AI development, hallucination was treated as a bug. The model confidently stated things that weren’t true. The assumption was that we’d engineer it out. But what if hallucination isn’t a flaw in the system? What if it’s a cost we’ve agreed to absorb because the system is too useful to turn off? Every hallucination that goes uncorrected becomes a small edit to someone’s understanding of the world. Multiply that across millions of daily interactions, and what we have isn’t a technical problem awaiting a fix. It’s a new condition. The model may have its own biases, its own trained tendencies, its own structural inclinations that ripple across its network in ways we don’t get to see. We’re absorbing the consequences in exchange for the convenience. The hallucination problem doesn’t go away. It becomes the price of admission. And the people paying that price are the ones whose memories are being rewritten, one confident, seamless, slightly wrong answer at a time.

If we layer Nader’s reconsolidation onto that: every time someone encounters an AI-mediated version of a past event, a news summary, a resurfaced photo, a chatbot conversation about something that happened, they’re reactivating their memory of it. And in that moment of reactivation, the memory is unstable, open to revision. The AI doesn’t need to lie. It just needs to present a slightly different version during the window when the memory is being rebuilt. The edit takes because biology allows it. Every recall becomes a reconstruction, and we stop noticing that the sticker has been picked at by someone else’s fingernails.

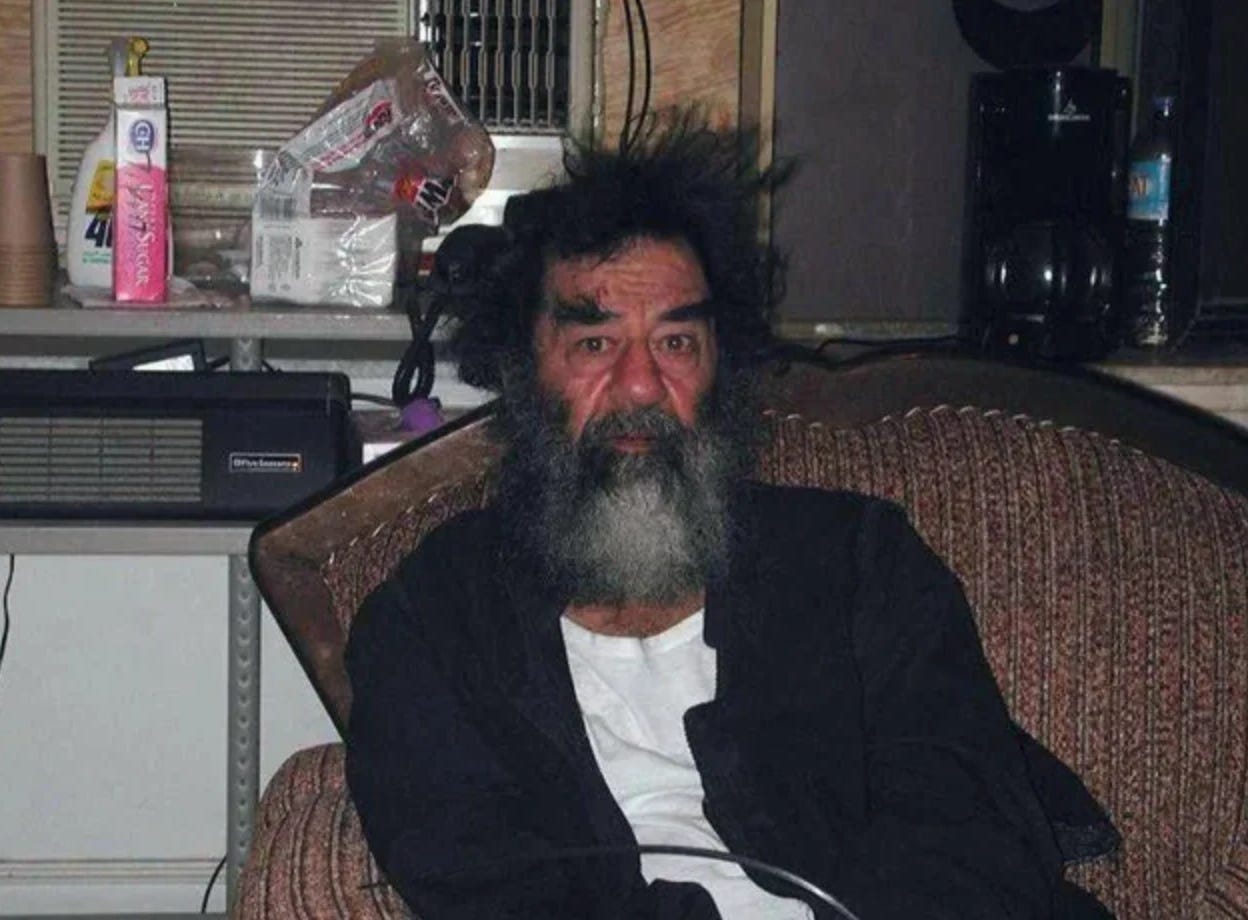

Synthetic History

We live in a time where truth has been distorted. “Post-truth” lost its power some time ago. We didn’t heed the warning. The battleground was once our identity. Now it is our memory.

It can be easy to look at this through the lens of fear, to imagine some machine busy implanting false memories one person at a time. But that’s not how it works. It never is. Advertising didn’t brainwash individuals. It shifted populations by degrees. The same logic applies here. The danger isn’t necessarily the single dramatic implantation. It’s the distributed editing of shared memory across populations, happening continuously through AI systems that mediate how we encounter our own past. A collective revision operating at the micro level, one conversation, one resurfaced image, one summarised article at a time, compounding into something that reshapes what a society believes it experienced. If memetics gave us a framework for how ideas spread, what we need now is a framework for how memories are edited at scale.

Fallible

Memory is fallible. That’s biology. We’ve been able to use this clinically, on a personal scale, to heal. But if memory is manipulable at scale, then the flexibility that once served us becomes the vulnerability that undoes us. The guardrails that kept our worst memories inaccessible won’t help when the manipulation isn’t targeting trauma. It targets the ordinary, revisable, everyday memories that sit right on the surface. The ones we’re used to picking at. The ones that are already changing every time we touch them.

I look at the sticker on my door. It’s fluffy and messy; the outline has changed every day this week. I like the mess, for now. It’s evidence. Proof that someone was here, proof that I’ve been dealing with something, proof that the process is mine. But I know that if someone came in while I was away and picked at it, I probably wouldn’t notice. It isn’t that important. Until it is.

We’re slowly learning the nuance of forgetting. How it can heal as much as it harms. How the right kind of forgetting is an act of care. At the same time, the systems around us are learning how to use that same flexibility at scale. Where we once bought the present, we now rewrite the past. Where we once shaped desire, we now shape memory itself.

We will always want to pick at the sticker. But the challenge now is making sure it’s our hands doing the picking.

Final words

Maybe the only thing

we can trust

is what’s too recent

to be rewritten,

too close

to be picked at,

what’s still wet,

what’s still ours.

love you loads,

-R

If you’ve enjoyed this week’s issue of Hot Girls Like Art, consider sending it to any of your friends who are interested in Artificial Intelligence, Memory, or Art that investigates the world we live in. I think they’ll enjoy it too.